Drawit, a Drawable User Interface

This project takes a novel approach, a drawable user interface, to address the massive increase in connected devices and the need to customize their interfaces. It explores how drawing can be used to interact with connected devices and how it empowers users to define the functions of their systems based on their personal, local, and often task-specific needs.

Process Overview

The idea

In everyday life, users often have to interact with different devices for specific tasks. These devices are increasingly connected to the Internet, meaning their functionalities and capabilities increase substantially. While it is true that this growth allows users to have a more personal, rich, and customized experience, the experience is undermined by the standard user interfaces of these devices.

Drawit is a novel approach that lets users design a user interface around their task-specific needs by drawing. Users can define most of the drawable language themselves.

Interactions

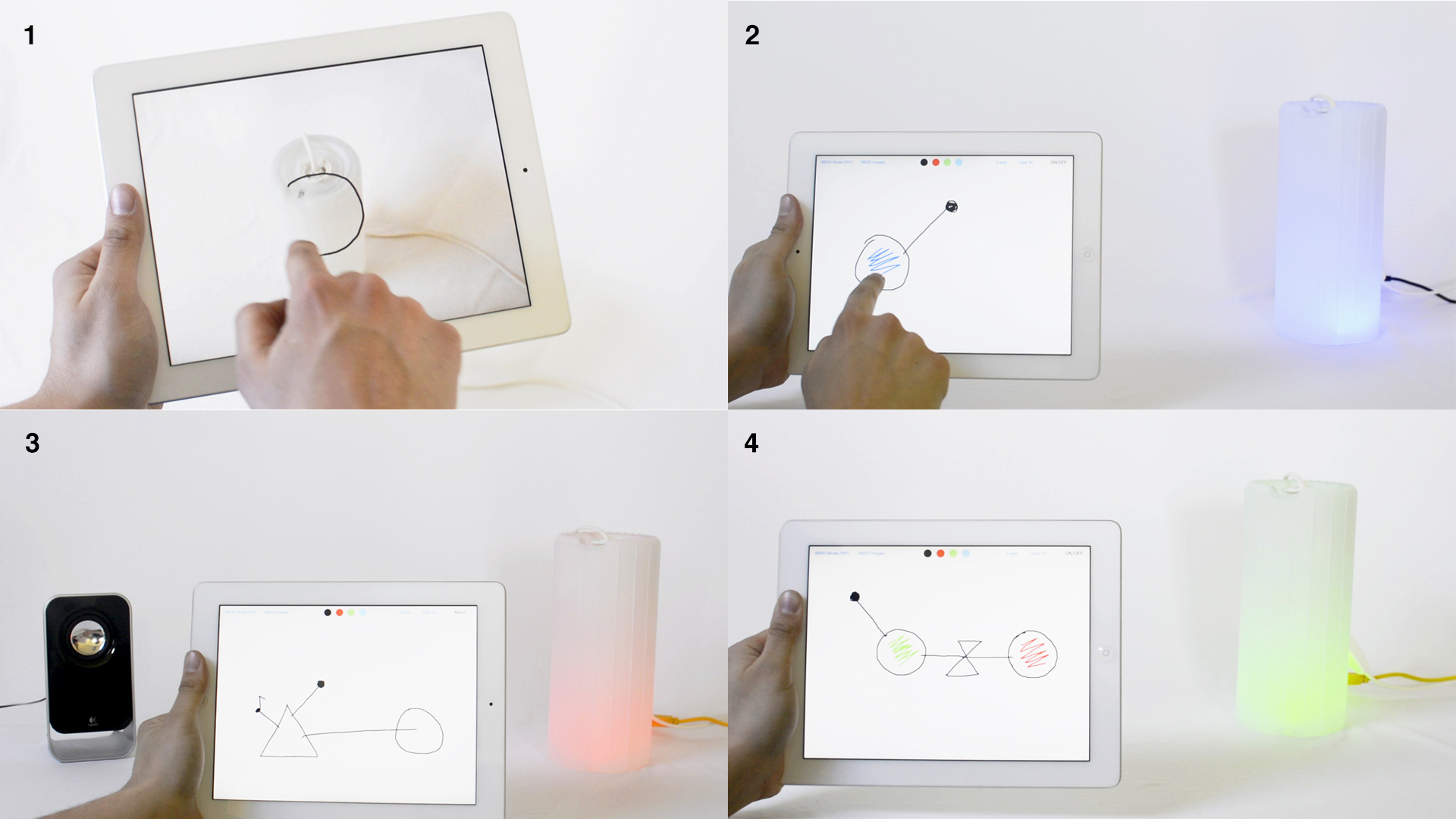

Accomplishing a specific task may require a single device or several connected devices. The drawable language gives the user the freedom to create interfaces for interacting with these devices. Several interactions have been developed:

Users can define the representation of the object by pointing the tablet's camera at the object and drawing the shape.

The system then recognizes the shape and knows which object it represents. Users can draw different functionalities starting from an empty canvas.

Multiple objects can be connected to each other, creating an interaction that adapts to its context.

Different programming elements have been integrated to let the user program basic interactions.

Design

As soon as I came up with the concept, I made fake prototypes to see how the interaction would feel and to start discussions about what it would mean to have a shape-defined user interface for objects.

Once I was able to test different interactions and see different scenarios, I defined the syntax of this drawable language. The idea was to create a scalable, customizable, and high-performance visual language to allow end users to define and tailor the functions of their systems.

The drawable language has three elements: 1) objects, 2) actions, and 3) relations. Objects represent physical devices. Actions define functionalities that can be executed when connected to an object. Finally, a relation represents the connection between an object and an action, or between two objects. Touch gestures such as tapping and zooming are also used as shortcuts for executing an action on an object.
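To make the three elements more concrete, here is a minimal Python sketch of how they could be modeled as data. It is only an illustration and not the app's actual Objective-C code: the class names (`DeviceObject`, `Action`, `Relation`, `Canvas`), the method names, and the choice that a relation always starts from an object are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass(frozen=True)
class DeviceObject:
    """A drawn shape that stands for a physical device (hypothetical model)."""
    name: str


@dataclass(frozen=True)
class Action:
    """A functionality that can be executed once it is connected to an object."""
    name: str


@dataclass(frozen=True)
class Relation:
    """A connection between an object and an action, or between two objects.

    Assumption: the relation is stored as starting from an object.
    """
    source: DeviceObject
    target: Union[DeviceObject, Action]


@dataclass
class Canvas:
    """Holds every relation the user has drawn so far."""
    relations: List[Relation] = field(default_factory=list)

    def connect(self, source, target):
        # Enforce the two kinds of relation described in the text.
        if not isinstance(source, DeviceObject):
            raise TypeError("a relation must start from an object")
        if not isinstance(target, (DeviceObject, Action)):
            raise TypeError("a relation must end at an object or an action")
        relation = Relation(source, target)
        self.relations.append(relation)
        return relation

    def actions_for(self, obj):
        # Actions that have been connected to the given object.
        return [r.target for r in self.relations
                if r.source == obj and isinstance(r.target, Action)]

    def linked_objects(self, obj):
        # Other objects that the given object has been connected to.
        return [r.target for r in self.relations
                if r.source == obj and isinstance(r.target, DeviceObject)]
```

With this model, drawing a "toggle" action next to a lamp and linking the lamp to a speaker would amount to two `connect` calls on the same canvas, after which `actions_for(lamp)` and `linked_objects(lamp)` report what the user has defined for that device.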

Implementation

I developed the app in Objective-C with Xcode 7, targeting the iPad 2. Communication between devices was initially implemented over Wi-Fi and later over Bluetooth. The image recognition system behind the drawing recognition was implemented from scratch using pattern recognition (k-NN with Euclidean distances).
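The following is not the project's code but a minimal Python sketch of the general technique named above: k-nearest-neighbor classification with Euclidean distance. It assumes each drawing has already been reduced to a fixed-length numeric feature vector; how Drawit extracts features from a drawing is not described here, so the function names, the value of `k`, and the example vectors are all hypothetical.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Euclidean distance between two feature vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_classify(sample, training, k=3):
    """Label a sample by majority vote among its k nearest training examples.

    training is a list of (feature_vector, label) pairs.
    """
    if not training:
        raise ValueError("training set is empty")
    # Sort the labeled examples by distance to the sample and keep the k closest.
    nearest = sorted(training, key=lambda item: euclidean(sample, item[0]))[:k]
    # Majority vote; on a tie, the label seen first among the nearest wins.
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Toy two-dimensional feature vectors, invented purely for illustration.
examples = [
    ((0.0, 1.0), "circle"),
    ((0.1, 0.9), "circle"),
    ((4.0, 1.0), "square"),
    ((3.9, 1.1), "square"),
    ((3.0, 1.0), "triangle"),
    ((3.1, 0.9), "triangle"),
]

label = knn_classify((0.05, 0.95), examples, k=3)
```

In this toy data, the two nearest neighbors of the sample are both labeled "circle", so the vote returns "circle". A k-NN classifier needs no training phase, which suits a language where users keep adding their own shapes: each new labeled drawing is simply appended to the example set.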

Contribution and Role in the Project

I designed, developed, and tested the whole project. This project is my bachelor's thesis at La Salle - Universitat Ramon Llull. It was developed as part of my research in the Seamless Interaction Group, with David Miralles as my advisor.

Publications, Prizes, and Exhibitions/Press

An academic research paper has been published at ACM CHI 2016: M. Exposito and D. Miralles. Interacting with Connecting Devices through a Drawable User Interface.

It was awarded the $10K Global Technology 2016 prize by MIT Enterprise Forum and was a finalist in Innovation By Design 2016 by FastCo Design, Student Category.

Drawit has been featured in the following exhibitions and press: FastCo Design, La Vanguardia, Substraction, LS:N Global - The Future Laboratory, Sonar +D 2015, CCCB +Humans